Submarine Cable Chokepoints: Why 99% of International Data Depends on Fragile Routes

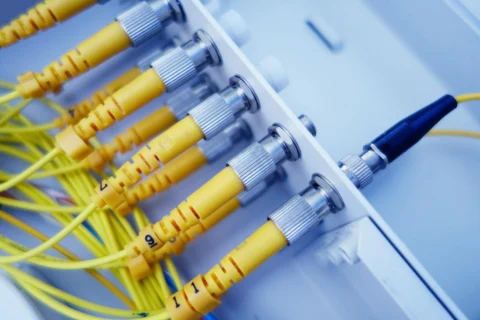

Subsea cables carry an estimated 99% of international data traffic, but conflict in the Red Sea and the Strait of Hormuz has exposed how repair access can fail when critical corridors close.
