The synthetic data bottleneck is one of the most consequential problems in artificial intelligence today. Every major AI language model, from ChatGPT to Llama, was built by consuming enormous quantities of human writing scraped from the internet: books, articles, forum posts, scientific papers. But that supply is finite. Researchers at Epoch AI estimate the total usable stock of human-generated public text at roughly 300 trillion tokens, and their projections suggest this stock will likely be fully consumed between 2026 and 2032.[s] When that happens, the industry faces a reckoning: scale down, or find another source of fuel.

The Synthetic Data Bottleneck Explained

Scaling has been the primary engine of AI progress for the past decade. Bigger models trained on more data consistently produce better results. Meta’s Llama 3, for example, was trained on over 15 trillion tokens, seven times more than its predecessor.[s] But the appetite for data is growing faster than humans can produce it. The amount of text fed into AI models has been growing roughly 2.5-fold per year, while computing power has grown about 4-fold per year.[s]

“There is a serious bottleneck here,” said Tamay Besiroglu, an author of the Epoch AI study. “If you start hitting those constraints about how much data you have, then you can’t really scale up your models efficiently anymore.”[s]

Making the synthetic data bottleneck worse, much of the web is now actively closing its doors. The MIT Data Provenance Initiative found that more than 28% of the most critical sources in common AI training datasets have been completely restricted via robots.txt, with restrictions surging since the fall of 2023.[s] The well is not just running low; it is being capped.

When AI Feeds on Itself: Model Collapse

The most obvious alternative to human data is synthetic data: text generated by AI models themselves. Companies are already doing this. Elon Musk declared in January 2025 that “the cumulative sum of human knowledge has been exhausted in AI training” and that models have turned to AI-generated information to keep improving.[s]

But training AI on its own output has a fundamental problem. A landmark 2024 study published in Nature demonstrated that when AI models are trained on data generated by previous AI models, they undergo “model collapse,” a degenerative process where the model progressively forgets rare patterns and edge cases in human language.[s]

Think of it like photocopying a photocopy. Nicolas Papernot, an assistant professor of computer engineering at the University of Toronto, put it simply: “You lose some of the information.” Worse, his research found that this process amplifies mistakes, bias, and unfairness already present in the data.[s]

The result is that language models trained this way begin to produce bland, repetitive, or outright nonsensical output. The diversity of human expression, the weird, the rare, the surprising, gets ironed out generation after generation.

Where Synthetic Data Actually Works

The synthetic data bottleneck does not mean all synthetic data is useless. In narrow, well-defined domains, it can work brilliantly. Google DeepMind’s AlphaGeometry system generated 100 million synthetic training examples by creating random geometric diagrams and exhaustively deriving every provable relationship within them.[s] The system solved 25 out of 30 Olympiad-level geometry problems, approaching the performance of a human gold medalist.

The critical difference: AlphaGeometry’s synthetic data was verifiable. Each generated proof could be checked by a symbolic engine. There was no ambiguity about whether the training examples were correct. This is why Epoch AI notes that synthetic data “has only been shown to reliably improve capabilities in relatively narrow domains like math and coding.”[s]

For general language tasks, that verification is impossible. There is no symbolic engine that can check whether a paragraph of prose is good, true, or interesting.

What Comes Next

The AI industry is not out of options, but none of the alternatives are clean substitutes. Some companies are pursuing licensing deals with publishers and platforms to access proprietary text. Others are experimenting with learning from images and video, though these modalities have not yet proven their value for training language models at scale.

Sam Altman, OpenAI’s CEO, has acknowledged the synthetic data bottleneck directly: “There’d be something very strange if the best way to train a model was to just generate, like, a quadrillion tokens of synthetic data and feed that back in. Somehow that seems inefficient.”[s]

Selena Deckelmann, chief product and technology officer at the Wikimedia Foundation, captured the strangeness of the moment: “It’s an interesting problem right now that we’re having natural resource conversations about human-created data.”[s]

The synthetic data bottleneck is not a future risk; it is an active constraint shaping how the next generation of AI models will be built. The models that navigate it well will be the ones that find ways to use synthetic data as a supplement to human knowledge, not a replacement for it.

The synthetic data bottleneck represents a fundamental scaling constraint for large language models. The core mechanism is straightforward: model performance has scaled reliably with training data volume, but the reservoir of human-generated text is finite and shrinking. Epoch AI’s peer-reviewed analysis estimates the effective stock of public human text at approximately 300 trillion tokens (90% CI: 100T to 1000T), accounting for data quality filtering and multi-epoch training.[s] Their 80% confidence interval places full utilization between 2026 and 2032.
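As a rough cross-check on that window, the sketch below asks how long a training set growing at a constant rate would take to reach the estimated stock. The starting size (15 trillion tokens, the Llama 3 figure) and the 2.5x annual growth rate are numbers quoted elsewhere in this article; the constant-growth assumption and the function name are illustrative simplifications, not Epoch AI's projection model.

```python
import math

def years_until_exhausted(stock_tokens, dataset_tokens, growth_per_year):
    """Years until a geometrically growing dataset matches the total stock."""
    return math.log(stock_tokens / dataset_tokens) / math.log(growth_per_year)

# Figures quoted in this article; holding the growth rate constant is an assumption.
STOCK = 300e12        # estimated stock of public human text, in tokens
LARGEST_RUN = 15e12   # tokens used to train Llama 3
GROWTH = 2.5          # annual growth factor in training-set size

years = years_until_exhausted(STOCK, LARGEST_RUN, GROWTH)
print(f"{years:.1f} years at constant growth")
```

Under these assumptions the answer is a little over three years, which is broadly consistent with the lower half of the 2026 to 2032 range. The estimate is only logarithmically sensitive to the stock size, which is why a wide uncertainty in tokens translates into a fairly narrow window in years.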

The Synthetic Data Bottleneck in Scaling Laws

The urgency depends on how aggressively models are overtrained. Compute-optimal training (the Chinchilla recipe of ~20 tokens per parameter) would exhaust the stock around 2028. But modern practice favors overtraining: Meta’s Llama 3 8B was trained on 15 trillion tokens, roughly 94 times the Chinchilla-optimal amount for its parameter count.[s] At 5x overtraining, the stock depletes by 2027. At 100x, it may already be gone.[s]
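The overtraining figure can be reproduced with simple arithmetic. This minimal sketch assumes the rule of thumb of about 20 tokens per parameter stated above; the function and constant names are illustrative.

```python
CHINCHILLA_TOKENS_PER_PARAM = 20  # approximate compute-optimal ratio

def overtraining_factor(train_tokens, n_params):
    """How many times more tokens were used than the compute-optimal amount."""
    optimal_tokens = CHINCHILLA_TOKENS_PER_PARAM * n_params
    return train_tokens / optimal_tokens

# Llama 3 8B: 15 trillion training tokens, 8 billion parameters.
factor = overtraining_factor(train_tokens=15e12, n_params=8e9)
print(round(factor))  # 94
```

The compute-optimal budget for an 8-billion-parameter model is about 160 billion tokens, so 15 trillion tokens works out to 93.75 times that amount.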

Compounding the synthetic data bottleneck is active restriction by content providers. The MIT Data Provenance Initiative documented that more than 28% of the most critical sources in the C4 training dataset are now fully restricted via robots.txt.[s] This contraction began in earnest in fall 2023, when major AI companies started documenting crawler-specific User-Agent strings that content owners could block.
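To make the mechanism concrete, here is a minimal sketch of how a crawler-specific block works, using Python's standard-library robots.txt parser. The robots.txt content and the URL are hypothetical; GPTBot is the User-Agent token OpenAI publishes for its crawler, and "SomeOtherBot" is a made-up name.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block one AI crawler, allow everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

url = "https://example.com/articles/some-post"
gptbot_allowed = parser.can_fetch("GPTBot", url)
other_allowed = parser.can_fetch("SomeOtherBot", url)
print(gptbot_allowed, other_allowed)  # False True
```

Compliance is voluntary: robots.txt only expresses the site owner's preference, and it is up to each crawler to honor it.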

Model Collapse: The Mathematical Reality

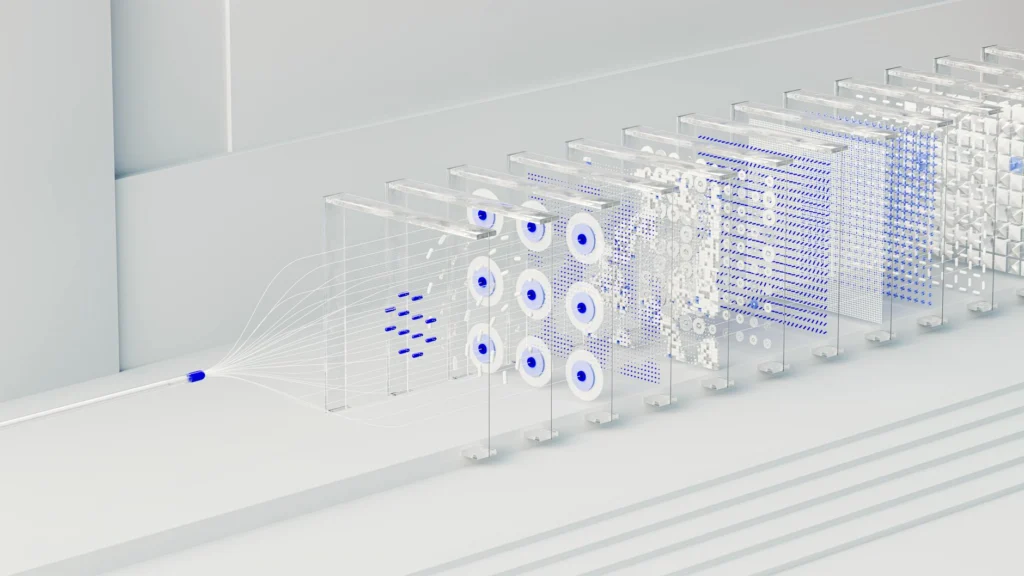

The 2024 Nature paper by Shumailov et al. formalized the phenomenon of model collapse with mathematical rigor.[s] The core result: when generative models are recursively trained on their own outputs, three compounding error sources drive progressive degradation.

First, statistical approximation error from finite sampling causes low-probability events (the “tails” of the distribution) to be underrepresented and eventually lost. Second, functional expressivity error arises because neural networks are only universal approximators in the infinite-width limit, introducing non-zero likelihood outside the true distribution’s support. Third, functional approximation error from optimization procedures (SGD bias, objective choice) compounds across generations.

The authors proved that for Gaussian approximations, the expected squared Wasserstein-2 distance between the nth-generation model and the original distribution diverges to infinity, while the variance collapses to zero almost surely. In plain terms: the model’s estimates drift away from the original distribution while its spread narrows to a point, losing all meaningful variation. They demonstrated this collapse in Gaussian mixture models (GMMs), variational autoencoders (VAEs), and fine-tuned LLMs.[s]
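The Gaussian case is easy to reproduce numerically. The sketch below is a toy one-dimensional illustration, not the paper's experimental setup: each generation draws a small sample from the current model, refits the mean and standard deviation, and hands the refit model to the next generation. The sample size, generation count, and seed are arbitrary choices.

```python
import random
import statistics

def recursive_gaussian_fit(generations, sample_size, seed=0):
    """Refit a Gaussian to its own samples; return the fitted variance per generation."""
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0 stands in for the real data distribution
    variances = []
    for _ in range(generations):
        # Train only on samples produced by the previous generation's model.
        samples = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        mu = statistics.fmean(samples)
        sigma = statistics.stdev(samples)
        variances.append(sigma ** 2)
    return variances

variances = recursive_gaussian_fit(generations=1000, sample_size=10)
print(f"first: {variances[0]:.3g}, last: {variances[-1]:.3g}")
```

Each refit adds sampling noise, and the logarithm of the fitted variance performs a random walk with a downward drift, so the spread shrinks toward zero even though no single step looks dramatic. Larger samples slow the drift but do not remove it.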

The paper identifies an absorbing-state argument for discrete distributions: when all samples converge to a single value, the Markov chain reaches an absorbing state (a delta function), and “with probability 1, it will converge to one of the absorbing states.”[s] This is not a risk that better engineering eliminates; it is a mathematical inevitability of recursive self-training without fresh human data.
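The discrete argument can be illustrated with a toy resampling loop. In this sketch, which is an assumption-laden simplification rather than the paper's setup, "training" means estimating token frequencies from the current data and "generating" means sampling from those frequencies, which together amount to resampling with replacement. The vocabulary, sample size, and seed are arbitrary.

```python
import random

def resample_until_absorbed(vocab, sample_size, max_generations=10_000, seed=0):
    """Resample each generation from the previous one until one token remains."""
    rng = random.Random(seed)
    # Generation 0: an even mix of every token in the vocabulary.
    population = [vocab[i % len(vocab)] for i in range(sample_size)]
    for generation in range(1, max_generations + 1):
        # Sample with replacement from the empirical distribution.
        population = rng.choices(population, k=sample_size)
        if len(set(population)) == 1:
            # Absorbing state: a token with zero count can never reappear.
            return generation, population[0]
    return None, None

generation, survivor = resample_until_absorbed(
    vocab=["a", "b", "c", "d", "e"], sample_size=20
)
print(f"only {survivor!r} survives after {generation} generations")
```

Which token survives depends on the seed, but some token always ends up taking over: once a token's count hits zero, nothing in the loop can bring it back.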

Synthetic Data That Escapes the Bottleneck

Not all synthetic data triggers collapse. The key variable is verifiability. Google DeepMind’s AlphaGeometry generated 100 million synthetic theorem-proof pairs by sampling random geometric premises, running a symbolic deduction engine to derive all reachable conclusions, then using traceback to identify minimal proofs.[s] The resulting proofs were machine-verifiable: each step followed from formal logical rules.
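The generate, deduce, and trace-back loop can be sketched on a toy scale. The code below is not DeepMind's implementation: abstract facts and hand-written rules (all hypothetical) stand in for geometric premises and the symbolic deduction engine, and the function names are illustrative.

```python
# Hypothetical Horn-style rules: if every fact in the body holds, so does the head.
RULES = [
    (frozenset({"A", "B"}), "C"),
    (frozenset({"C"}), "D"),
    (frozenset({"B", "E"}), "F"),
]

def deduce_closure(premises):
    """Forward-chain to a fixed point, recording the rule body behind each new fact."""
    parents = {p: None for p in premises}  # None marks a sampled premise
    changed = True
    while changed:
        changed = False
        for body, head in RULES:
            if head not in parents and body.issubset(parents):
                parents[head] = body
                changed = True
    return parents

def traceback(fact, parents):
    """Collect only the premises that the recorded derivation of `fact` depends on."""
    body = parents[fact]
    if body is None:
        return {fact}
    needed = set()
    for b in body:
        needed |= traceback(b, parents)
    return needed

def synthesize_examples(premises):
    """Map each derived conclusion to the premises its proof actually uses."""
    parents = deduce_closure(premises)
    return {fact: traceback(fact, parents)
            for fact, body in parents.items() if body is not None}

examples = synthesize_examples({"A", "B", "E"})
print(examples)
```

Starting from premises A, B, and E, the conclusion D is paired with only A and B, because E plays no part in its derivation. Every pair is correct by construction, which is the property that matters here. Note that this traceback returns the premises used by the first derivation found, which is minimal in this toy case but not guaranteed to be in general.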

This approach solved the synthetic data bottleneck for geometry by exploiting a domain where correctness is decidable. The system solved 25 of 30 IMO-level problems, compared to 10 for the previous state of the art.[s] Similar success has been demonstrated in code generation, where synthetic programs can be verified by execution.
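For code, the verification step can be as simple as running each candidate against known test cases and keeping only those that pass. The following is a minimal sketch under stated assumptions: the candidate programs are hand-written stand-ins for model outputs, the task and test cases are hypothetical, and a real pipeline would execute candidates in an isolated sandbox rather than calling exec directly.

```python
def passes_tests(source, func_name, test_cases):
    """Run a candidate program and check it against known input/output pairs."""
    namespace = {}
    try:
        exec(source, namespace)  # unsafe outside a sandbox; for illustration only
        func = namespace[func_name]
        return all(func(*args) == expected for args, expected in test_cases)
    except Exception:
        # Syntax errors, missing functions, and runtime errors all count as failures.
        return False

# Hypothetical model outputs for the task "return the larger of two numbers".
CANDIDATES = [
    "def larger(a, b):\n    return a if a > b else b\n",
    "def larger(a, b):\n    return a\n",         # wrong whenever b is larger
    "def larger(a, b)\n    return max(a, b)\n",  # syntax error: missing colon
]
TESTS = [((1, 2), 2), ((5, 3), 5), ((4, 4), 4)]

verified = [src for src in CANDIDATES if passes_tests(src, "larger", TESTS)]
print(f"{len(verified)} of {len(CANDIDATES)} candidates kept")
```

Only the first candidate survives the filter. The filter is only as strong as its test cases, so passing them is evidence of correctness rather than proof.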

The pattern is clear: synthetic data works when you can filter or verify it against ground truth. For open-ended language generation, no such oracle exists. Epoch AI’s assessment is direct: synthetic data “has only been shown to reliably improve capabilities in relatively narrow domains like math and coding.”[s]

Navigating the Constraint

Several strategies are being explored to mitigate the synthetic data bottleneck. Undertraining (growing parameters while holding dataset size fixed) can deliver the equivalent of up to two additional orders of magnitude of compute-optimal scaling, but eventually plateaus.[s] Multi-modal training on images and video could expand the effective data stock, though text remains the primary modality for frontier capabilities. Data efficiency improvements, including better tokenization, curriculum learning, and mixture-of-experts architectures, reduce the tokens needed per unit of capability gain.

The Shumailov et al. paper offers one precise prescription: access to the original distribution is crucial. Their mixing parameter framework shows that collapse can be mitigated by maintaining a sufficient proportion of genuine human data in each training generation.[s] This makes authentic human-generated content an appreciating asset. As the authors conclude, “the value of data collected about genuine human interactions with systems will be increasingly valuable.”[s]
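The effect of keeping real data in the mix can be seen in a toy setting. This sketch is an illustration under assumptions, not the paper's method or experiments: a one-dimensional Gaussian is refit each generation on a training set in which a fixed fraction comes from the original distribution and the rest from the previous generation's model. The function name, the 50% fraction, the sample size, and the seed are arbitrary choices.

```python
import random
import statistics

def final_variance(real_fraction, generations=1000, sample_size=10, seed=0):
    """Fitted variance after many generations with a share of fresh real data."""
    rng = random.Random(seed)
    n_real = round(real_fraction * sample_size)
    mu, sigma = 0.0, 1.0
    for _ in range(generations):
        # Fresh draws from the original distribution, standing in for human data.
        real = [rng.gauss(0.0, 1.0) for _ in range(n_real)]
        # Draws from the previous generation's fitted model.
        synthetic = [rng.gauss(mu, sigma) for _ in range(sample_size - n_real)]
        data = real + synthetic
        mu, sigma = statistics.fmean(data), statistics.stdev(data)
    return sigma ** 2

collapsed = final_variance(real_fraction=0.0)
anchored = final_variance(real_fraction=0.5)
print(f"no real data: {collapsed:.3g}, half real data: {anchored:.3g}")
```

With no real data the fitted variance shrinks toward zero; with half of each training set drawn from the original distribution it stays on the same order as the true variance, because every generation is pulled back toward the source. How much real data is enough in practice is an empirical question this toy does not answer.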

The synthetic data bottleneck will not halt AI progress entirely. But it will reshape the economics of the field, making high-quality human data a scarce commodity and rewarding companies that invest in verification, curation, and novel data generation strategies rather than brute-force scaling.