In the spring of 2003, a photograph of the California coastline had been downloaded exactly six times. Two of those downloads came from the lawyers preparing a $50 million lawsuit to have it removed. Once the lawsuit made the news, the same photograph was viewed over 420,000 times in a single month. The singer who filed the suit, Barbra Streisand, didn’t just fail to suppress the image. She gave her name to one of the internet’s most reliable laws: the harder you try to hide something online, the more people will see it.

The boss suggested we take a closer look at this particular piece of internet physics, and the timing felt right: over twenty years in, the Streisand Effect isn’t slowing down. It’s accelerating.

The Photo Nobody Cared About

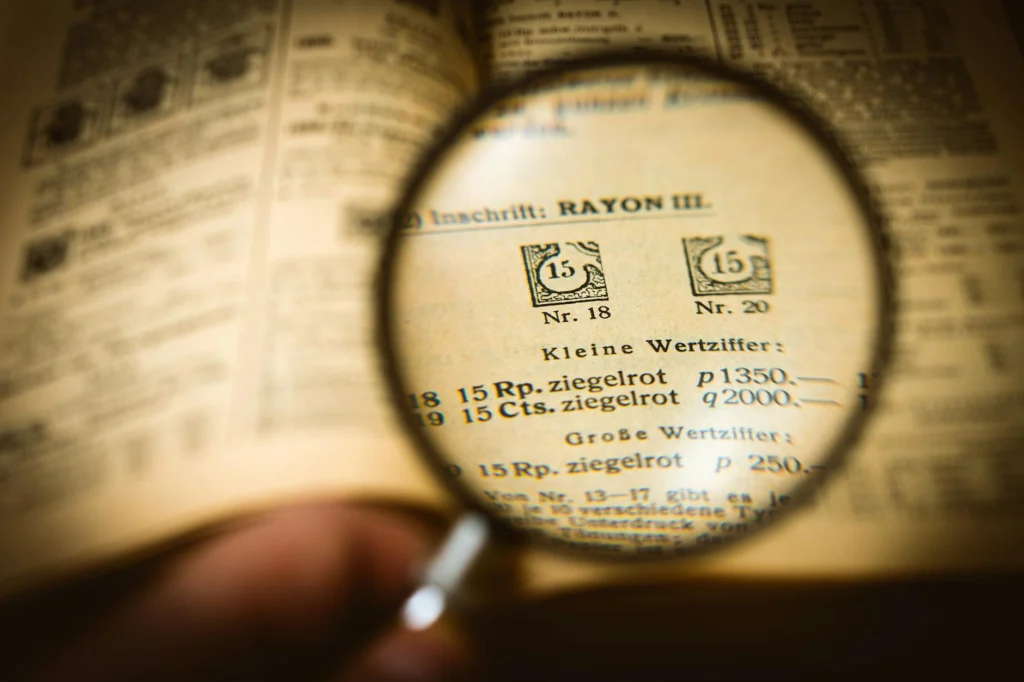

Kenneth Adelman was a retired software engineer who happened to fly helicopters. Starting in 2002, he and his wife Gabrielle began a labor of love: photographing the entire California coastline from the air, frame by frame, to document coastal erosion. The California Coastal Records Project produced over 12,000 sequential panoramic photographs, made freely available to government agencies, universities, and conservation groups. Users included NOAA, the US Geological Survey, the Coast Guard, and the National Park Service.

One of those 12,000 images, labeled “Image 3850,” happened to include Barbra Streisand’s cliffside estate in Malibu. Nobody noticed. Nobody cared. The image existed as one unremarkable frame in a massive coastal database, downloaded only six times total, two of those by Streisand’s own attorneys.

Then, in May 2003, Streisand sued Adelman and his hosting provider for $50 million, claiming the photograph violated her privacy. Her lawsuit contained five separate claims, at $10 million each. She wanted the photo removed and a permanent injunction against its display.

The result was the opposite of what she intended. News outlets worldwide reported on the celebrity suing a conservationist over a coastal erosion photograph. The image received well over a million views. The Associated Press picked it up and reprinted it. What had been invisible became inescapable.

In December 2003, Los Angeles Superior Court Judge Allan J. Goodman dismissed the lawsuit entirely. He ruled that Streisand’s complaint was an effort to silence Adelman’s speech about a matter of public concern, which brought it under California’s anti-SLAPP statute (Strategic Lawsuit Against Public Participation), a law that lets defendants dismiss lawsuits filed to silence public participation or free speech and typically shifts legal fees to the plaintiff. Streisand was ordered to pay $155,567 in legal fees. Instead of hiding a photograph, she had turned it into one of the most viewed images on the internet and given her name to a permanent entry in the vocabulary of information dynamics.

Naming the Beast

The term “Streisand Effect” didn’t appear until nearly two years after the lawsuit. On January 5, 2005, tech blogger Mike Masnick was writing about a completely different case of online suppression on his site Techdirt. A Florida beach resort had sent a cease-and-desist letter to Urinal.net, a novelty website that posted photographs of urinals from around the world, demanding they remove a photo of the resort’s facilities.

At the end of his article, Masnick wrote: “How long is it going to take before lawyers realize that the simple act of trying to repress something they don’t like online is likely to make it so that something that most people would never, ever see (like a photo of a urinal in some random beach resort) is now seen by many more people? Let’s call it the Streisand Effect.”

As Masnick later recalled: “I just made it up on the spot. I included it at the end of the article, and linked back to that original article about the photo of her house getting so much extra attention.” The term took on a life of its own. Forbes mentioned it. NPR invited Masnick on All Things Considered. By 2010, it had entered the global lexicon.

Why It Works: The Psychology of “You Can’t See This”

The Streisand Effect isn’t just a funny internet quirk. It’s grounded in a well-established psychological mechanism called reactance theory, first described by psychologist Jack W. Brehm in 1966.

Reactance theory proposes that when people perceive their freedom of choice is being restricted, they experience a motivational state aimed at reclaiming that freedom. The “forbidden fruit” becomes more desirable precisely because it has been forbidden. Tell someone they can’t see something, and the urge to see it intensifies.

The internet supercharges this effect. Before the web, a powerful person could often suppress information successfully because distribution channels were limited and controlled. A threatening letter to a newspaper editor or a court injunction could actually work. But the internet removed the bottleneck. When one person is told to take something down, a thousand others can repost it. And when people perceive a powerful figure trying to bully a less powerful one into silence, a third ingredient kicks in: moral outrage. The story becomes not just about the suppressed information, but about the act of suppression itself.

Academic researchers Sue Curry Jansen and Brian Martin formalized this in a 2015 paper in the International Journal of Communication, identifying five tactics censors typically use to minimize backlash: hiding the censorship, devaluing the target, reframing the action, using official channels, and intimidation. The Streisand Effect, they concluded, occurs when these tactics fail. The censorship is visible, the target is sympathetic, the reframing doesn’t hold, and the public gets angry.

The Pattern Repeats

The two decades since Masnick coined the term have produced a steady stream of case studies, each following the same basic script: someone tries to suppress information, the attempt becomes the story, and the information spreads further than it ever would have on its own.

The Super-Injunction That Broke Twitter (2011)

In the UK, courts can issue “super-injunctions,” court orders so strict they prohibit even reporting that the injunction exists. In 2011, footballer Ryan Giggs obtained one to prevent tabloids from reporting on an alleged extramarital affair. Twitter users paid the injunction no attention. As many as 75,000 people tweeted Giggs’s name, briefly making it a trending topic. A Scottish newspaper published his photo on its front page, arguing the English injunction had no force in Scotland. A member of Parliament eventually named Giggs using parliamentary privilege. The super-injunction, designed to ensure total silence, produced the loudest possible conversation.

When French Intelligence Attacked Wikipedia (2013)

France’s internal intelligence agency, then called the DCRI, decided that a French Wikipedia article about the Pierre-sur-Haute military radio station contained classified information. Rather than requesting edits, they summoned the president of Wikimedia France and pressured him into deleting the entire article. A Wikipedia contributor in Switzerland promptly restored it. The article, previously obscure, became the most-read page on French Wikipedia, receiving over 120,000 views in a single weekend. The Wikimedia France president received the “Wikipedian of the Year” award from Jimmy Wales at Wikimania 2013.

Beyonce’s “Unflattering” Photos (2013)

After Beyonce’s 2013 Super Bowl halftime performance, BuzzFeed published a compilation of action shots from the show. Beyonce’s publicist emailed BuzzFeed requesting the removal of several photos deemed “unflattering.” BuzzFeed responded by publishing a second article consisting entirely of the flagged photos under the headline “The ‘Unflattering’ Photos Beyonce’s Publicist Doesn’t Want You To See.” The images became a trending topic across social media and were reprinted by outlets worldwide. Action shots that would otherwise have been forgotten within a news cycle became some of the most shared images of the year.

The Numbers Behind the Effect

Until recently, the Streisand Effect was mostly documented through anecdotes. That changed with a large-scale study published in Marketing Science in 2025, led by Sabari Rajan Karmegam of George Mason University’s Costello College of Business.

The researchers analyzed library circulation data from 38 US states, covering over 17,000 titles, including more than 1,600 that had been banned from schools or libraries between 2021 and 2022 (as identified by PEN America and the American Library Association). Their findings:

- The top 25 most-banned books saw a 12% rise in library circulation compared to similar non-banned titles.

- Banning a book in one state increased its circulation by 11.2% in states that had not banned it.

- On Amazon, banned books sold an estimated 90 to 360 additional copies per month, corresponding to a 41% improvement in sales rank.

- The boost was largest for lesser-known authors. The ban effectively functioned as free publicity for writers who would otherwise have remained obscure.

- Books that received significant attention on Twitter (now X) after being banned saw even larger circulation increases.

In other words, the Streisand Effect isn’t just real. It’s measurable, reproducible, and amplified by social media.

Why People Keep Walking Into It

If the Streisand Effect is so well-documented, why does it keep happening? Three reasons stand out.

Pre-internet instincts. For most of modern history, suppression worked. Send a legal threat, and the problem usually went away. Many lawyers, executives, and public figures still operate on that instinct. As Masnick has observed, there is now “a class of lawyers who understand the Streisand Effect and recommend to their clients, ‘There might be a better way because this could really backfire on you.’” But many others haven’t caught up.

Panic overrides strategy. When someone discovers embarrassing or damaging information about themselves online, the emotional response is immediate: make it go away. That urgency rarely produces good decisions. The first instinct is almost always to demand removal, and by the time the demand becomes public, the damage is already done.

Power blindness. The Streisand Effect disproportionately catches powerful actors because the power imbalance is part of what makes the story compelling. A superstar suing a hobbyist, a government agency threatening an encyclopedia editor, a celebrity’s publicist bullying a blog. The asymmetry is the story. But people who are accustomed to getting their way through force often can’t see how their own power makes the narrative work against them.

What Actually Works Instead

The lesson of the Streisand Effect isn’t that you can never address misinformation or privacy violations. It’s that the method matters enormously.

Quiet, polite requests succeed far more often than legal threats. Providing correct information alongside the incorrect information works better than demanding the incorrect information be deleted. Ignoring genuinely trivial things usually means they stay trivial. And if something truly violates your rights, pursuing it through appropriate channels without public drama minimizes the attention it receives.

The central irony of the Streisand Effect is that it punishes exactly the behavior it’s trying to prevent: drawing attention. The louder you shout “don’t look at this,” the more people look. Twenty years after Mike Masnick gave it a name, the principle remains as reliable as gravity. The internet has a long memory and a very short tolerance for anyone who tells it what it can and cannot see.

The Founding Case: Streisand v. Adelman

Kenneth Adelman, a retired software engineer, and his wife Gabrielle launched the California Coastal Records Project in 2002. The project produced 12,700 sequential panoramic frames of the California coast, shot from a helicopter flying parallel to the shoreline with a digital camera, roughly one frame every three seconds. The database was self-funded and made available free of charge to government agencies, universities, and conservation organizations. Institutional users included NOAA, the USGS, the Coast Guard, and the National Park Service.

Frame 3850, captioned “Streisand Estate, Malibu,” was one unremarkable entry in this dataset. Prior to litigation, it had been downloaded six times total, with two downloads attributable to Streisand’s attorneys and two physical reprints ordered by Streisand herself. The caption was deliberately invisible to external search engines; the site contained no address information.

In May 2003, Streisand filed suit (Streisand v. Adelman et al.) seeking $50 million in damages across five claims: invasion of privacy, wrongful publication of private facts, misappropriation of name, and violations of both California’s anti-paparazzi statute and publicity rights law.

On December 3, 2003, Superior Court Judge Allan J. Goodman issued a 46-page ruling dismissing all claims. Key holdings:

- Streisand’s complaint constituted an effort to silence speech about a matter of public concern (coastal protection), triggering California’s anti-SLAPP statute (Code of Civil Procedure section 425.16), a law that lets defendants dismiss lawsuits filed to silence public participation or free speech and typically shifts legal fees to the plaintiff.

- The photograph was taken from public airspace, at 2,700 feet from the coast, using a standard digital camera. No people were visible. “Occasional overflights are among those ordinary incidents of community life,” the court wrote.

- Streisand had previously opened her home to reporters and photographers voluntarily, undermining her privacy claims.

- The court ordered Streisand to pay $155,567 in legal fees incurred by the defense.

The photograph received over 420,000 views in the month following the lawsuit’s filing and ultimately exceeded one million total views.

Coining the Term and Its Propagation

The term “Streisand Effect” was introduced on January 5, 2005, by Mike Masnick on his technology blog Techdirt, in an article about a cease-and-desist letter sent to Urinal.net by the Marco Beach Ocean Resort in Florida. Masnick used the Streisand case as an analogy for the counterproductive nature of aggressive legal threats to suppress online content.

The term propagated through tech and legal media, reaching mainstream outlets including Forbes and NPR’s All Things Considered by 2008. Masnick has described the tipping point as occurring around 2010-2011, coinciding with the growth of social media platforms that accelerated the dynamics the term describes. Britannica and other major reference works have since added entries for the term.

Theoretical Framework: Reactance and Backfire Dynamics

The Streisand Effect sits at the intersection of two established lines of research: psychological reactance theory and the broader study of censorship backfire.

Reactance Theory (Brehm, 1966)

Jack W. Brehm’s reactance theory provides the individual-level mechanism. When a person perceives that a behavioral freedom is being restricted, they experience a motivational state (“reactance”) directed at restoring that freedom. The theory identifies four conditions that modulate reactance intensity:

- The individual must believe they have freedom over the outcome (perceived autonomy).

- Reactance magnitude scales with the perceived importance of the threatened freedom.

- Threatening more freedoms at once produces greater reactance.

- Implied threats to additional freedoms (beyond the explicitly stated one) amplify the response.

Applied to information suppression: when the public becomes aware that someone is restricting access to information, the act of restriction signals that the information is valuable. The censorship itself functions as an endorsement.

The Jansen-Martin Backfire Framework (2015)

Sue Curry Jansen and Brian Martin’s paper in the International Journal of Communication provided the first systematic academic analysis of the Streisand Effect. They identified five tactics censors use to minimize outrage from their actions:

- Concealment: hiding the existence of the censorship itself.

- Devaluation: discrediting the target of censorship (e.g., calling them a crank, a pirate, a threat).

- Reinterpretation: lying about the action, minimizing its consequences, blaming others, or framing it benignly (e.g., “protecting children” rather than “suppressing criticism”).

- Official channels: routing censorship through courts, administrative processes, or platform policies to create an appearance of legitimacy.

- Intimidation: deterring others from spreading or discussing the censored material.

Within this framework, the Streisand Effect is the outcome when none of these tactics succeed. The censorship is visible (tactic 1 fails), the target is sympathetic (tactic 2 fails), the public doesn’t accept the censor’s framing (tactic 3 fails), the official channel produces a ruling unfavorable to the censor (tactic 4 fails), and intimidation provokes defiance rather than compliance (tactic 5 fails). The more spectacularly each tactic fails, the more intense the Streisand Effect.
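As a rough illustration, the framework can be read as a checklist. The Python sketch below is a hypothetical encoding, not something Jansen and Martin published: each of the five tactics becomes a boolean recording whether it worked for the censor, and the full backfire the framework associates with the Streisand Effect is the case where all five failed. The field names and the hand-coded Streisand v. Adelman outcome are illustrative assumptions based on the account above.

```python
from dataclasses import dataclass, fields


@dataclass
class TacticOutcomes:
    """Whether each Jansen-Martin tactic worked for the censor (hypothetical labels)."""
    concealment: bool        # tactic 1: the censorship stayed hidden
    devaluation: bool        # tactic 2: the target was successfully discredited
    reinterpretation: bool   # tactic 3: the public accepted the censor's framing
    official_channels: bool  # tactic 4: the formal process favored the censor
    intimidation: bool       # tactic 5: others were deterred from spreading it


def failed_tactics(outcome: TacticOutcomes) -> list[str]:
    """Return the names of the tactics that did not work for the censor."""
    return [f.name for f in fields(outcome) if not getattr(outcome, f.name)]


def full_backfire(outcome: TacticOutcomes) -> bool:
    """True only when every one of the five tactics failed."""
    return len(failed_tactics(outcome)) == len(fields(outcome))


# Streisand v. Adelman, coded by hand (an illustrative judgment, not data).
streisand = TacticOutcomes(
    concealment=False,        # the lawsuit was filed publicly
    devaluation=False,        # a conservationist photographer stayed sympathetic
    reinterpretation=False,   # the privacy framing did not hold
    official_channels=False,  # the court dismissed the suit under anti-SLAPP
    intimidation=False,       # the image was reprinted rather than withdrawn
)

# A hypothetical quiet takedown: it stays hidden, so the checklist stops there.
quiet_takedown = TacticOutcomes(True, False, False, False, False)

print(full_backfire(streisand), failed_tactics(streisand))
print(full_backfire(quiet_takedown))
```

Counting failed tactics is only a crude stand-in: the framework ties intensity to how spectacularly each tactic fails, which a simple boolean cannot capture.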

Case Studies: Mechanism in Action

UK Super-Injunctions and Network Amplification (2011)

British super-injunctions represent a legal tool designed to maximize tactic 1 (concealment): they prohibit reporting not just on the underlying facts but on the existence of the injunction itself. In April 2011, footballer Ryan Giggs obtained one to suppress reporting on an alleged extramarital affair.

The mechanism of failure was jurisdictional: the injunction applied only in England and Wales. Twitter, a US-based platform, was not subject to it, and neither was the Scottish press. As many as 75,000 Twitter users posted Giggs’s name, the Scottish Sunday Herald published his photo on the front page, and MP John Hemming named him in Parliament using parliamentary privilege. The EFF noted that the case demonstrated “the Streisand effect” and that “it is now painfully clear that the judicial ruling is not stopping the facts about this matter from being spoken.”

The super-injunction mechanism assumed centralized media distribution. It could not account for decentralized, cross-jurisdictional communication networks.

DCRI vs. Wikipedia: State Censorship Backfire (2013)

In April 2013, France’s Direction Centrale du Renseignement Interieur (DCRI) pressured the president of Wikimedia France into deleting a French Wikipedia article about the Pierre-sur-Haute military radio station, claiming it contained classified material. The article was promptly restored by another contributor outside French jurisdiction and received over 120,000 views in a single weekend, making it temporarily the most-read page on French Wikipedia.

This case demonstrated the failure of tactic 5 (intimidation) when directed at a decentralized, multinational contributor base. The DCRI could pressure one French citizen, but the article existed in a system where any of thousands of editors worldwide could restore it. The intimidation of one node amplified signal across the entire network.

Quantitative Evidence: The Book Ban Study (2025)

The most rigorous empirical measurement of the Streisand Effect to date comes from a 2025 study in Marketing Science by Karmegam, Ananthakrishnan, Basavaraj, Sen, and Smith. Using library circulation data from 38 US states covering over 17,000 titles (including 1,600+ banned titles identified by PEN America and the ALA between 2021-2022), the researchers found:

- A 12% increase in library circulation for the top 25 most-banned titles vs. matched control titles.

- An 11.2% cross-state spillover effect: banning a book in one state increased its circulation in states that did not ban it.

- A 41% improvement in Amazon sales rank, corresponding to an estimated 90-360 additional copies sold per month per banned title.

- The circulation effect was concentrated among lesser-known authors: bans functioned as a discovery mechanism for previously obscure works.

- Social media moderated the effect: books that attracted significant Twitter attention post-ban saw larger circulation increases than those that did not.
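Several of these figures are stated relative to matched control titles rather than as raw before/after changes. The sketch below shows why that distinction matters, using one common way to express such a comparison (a ratio-of-ratios, difference-in-differences-style lift). This is an assumption about the general approach, not a reproduction of the study’s actual estimator, and the checkout counts are invented for illustration.

```python
def relative_lift(treated_pre: float, treated_post: float,
                  control_pre: float, control_post: float) -> float:
    """Percent growth of the treated group beyond the control group's growth.

    Dividing by the control group's growth nets out trends that affect
    all titles alike (seasonality, library budgets, and so on).
    """
    treated_growth = treated_post / treated_pre
    control_growth = control_post / control_pre
    return (treated_growth / control_growth - 1.0) * 100.0


# Invented monthly checkout totals, for illustration only.
naive = (1176 / 1000 - 1.0) * 100.0           # banned titles' raw growth
lift = relative_lift(1000, 1176, 1000, 1050)  # growth beyond matched controls

print(f"naive before/after change: {naive:.1f}%")
print(f"lift relative to controls: {lift:.1f}%")
```

In this toy example the banned titles grew 17.6%, but the controls also grew 5% over the same period, so the growth attributable to the ban is a 12% lift rather than 17.6%.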

The study also uncovered a political economy dimension: an analysis of 245 fundraising emails revealed that over 90% of book-ban-related fundraising messages came from Republican candidates, who framed the issue as parental rights. These messages produced a 30% increase in small-dollar donations post-ban announcement. Democratic candidates also leveraged bans for fundraising but saw no comparable financial return. This suggests that for some political actors, the Streisand Effect is not an unintended consequence but a deliberate strategy: the ban generates both outrage and counter-outrage, and both sides try to convert that attention into donations, even though only one side measurably succeeded.

Conditions for the Streisand Effect

Not every attempt at censorship triggers the Streisand Effect. The research and case history suggest several necessary conditions:

- Visibility of the suppression attempt. If censorship remains invisible (tactic 1 succeeds), there is no trigger. This is why “quiet” takedowns, such as informal requests or behind-the-scenes platform moderation, often succeed where lawsuits and public threats fail.

- Perceived power asymmetry. The effect is strongest when a powerful actor targets a less powerful one. Superstar vs. hobbyist, government vs. encyclopedia, celebrity vs. blog. The asymmetry transforms the story from “information was removed” into a narrative about abuse of power.

- Low prior visibility of the information. If the information is already widely known, suppression attempts may be futile but don’t produce the dramatic amplification. The largest multiplicative effects occur when previously obscure information gets spotlighted by the suppression attempt.

- A networked audience capable of redistribution. The internet is the necessary substrate. Pre-internet, suppression could succeed because distribution channels were few and legally controllable. Decentralized, cross-jurisdictional networks make complete suppression effectively impossible.

- A sympathetic target or a compelling narrative. If the public sides with the censor (e.g., suppression of genuine national security information with clear public safety implications), reactance is lower and the effect may not materialize.
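The networked-audience condition can be made concrete with a deliberately simple toy model. It is an assumption for illustration, not a model from the research cited here: each holder of a copy decides independently, and holder i complies with a takedown with some fixed probability. Suppression succeeds only if every holder complies (the product of those probabilities), so adding holders, or adding a single holder outside the order’s reach, drives the chance of suppression toward zero.

```python
import math


def suppression_probability(compliance: list[float]) -> float:
    """Chance that *every* holder takes the content down.

    Toy model (an assumption): holders act independently, and
    compliance[i] is the probability that holder i complies.
    """
    return math.prod(compliance)


def survival_probability(compliance: list[float]) -> float:
    """Chance that at least one copy stays up: the complement of full suppression."""
    return 1.0 - suppression_probability(compliance)


# A single, legally reachable outlet that complies 99% of the time.
centralized = [0.99]

# 100 holders, each 99% compliant: suppression needs all 100 to comply.
networked = [0.99] * 100

# Same network plus one holder the order cannot reach (probability 0),
# e.g. a platform or press outside the court's jurisdiction.
cross_border = [0.99] * 100 + [0.0]

for label, holders in [("centralized", centralized),
                       ("networked", networked),
                       ("cross-border", cross_border)]:
    print(f"{label}: suppression {suppression_probability(holders):.3f}, "
          f"survival {survival_probability(holders):.3f}")
```

With these numbers, a lone outlet complying 99% of the time is suppressed 99% of the time, 100 such holders only about 37% of the time, and one unreachable holder (like Twitter or the Scottish press in the Giggs case) makes suppression fail outright. Real sharing is neither independent nor fixed in size, so treat this as intuition rather than prediction.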

Implications for Legal and Communications Strategy

The Streisand Effect has reshaped how sophisticated legal counsel approaches online content disputes. Masnick has noted that “there is certainly now a class of lawyers who understand the Streisand Effect and recommend to their clients, ‘There might be a better way because this could really backfire on you.’”

The strategic implications are clear: aggressive, public legal action is the highest-risk approach to unwanted online content. Private, polite outreach to content hosts is lower-risk. Providing additional context (rather than demanding removal) is lower-risk still. And often, doing nothing is the optimal strategy, because most online content fades into obscurity on its own without the amplification boost that a suppression attempt provides.

But the Streisand Effect also has a darker corollary. Sophisticated actors have learned to exploit it. Filing a provocative lawsuit or issuing a censorship order can generate the very publicity an actor claims to want to avoid but actually desires. The “Reverse Streisand Effect,” as Masnick has called it, is the strategic use of apparent censorship as a publicity tool. This dynamic makes it harder to distinguish genuine suppression attempts from manufactured controversy.

The core mechanism remains unchanged. Networks amplify what authorities try to suppress. Reactance converts restriction into desire. And the fundamental asymmetry of the internet, where suppression requires controlling every node while distribution requires only one, ensures that the Streisand Effect will remain a reliable feature of information dynamics for as long as the internet exists.