Survivorship bias is the logical error of drawing conclusions from an incomplete data set, one where the failures have been removed. You study the winners, find patterns in their behavior, and conclude those patterns caused the winning. The problem is that the losers, the ones you never examined, might have done exactly the same things. Abraham Wald figured this out while trying to keep bomber crews alive in World War II. The principle he uncovered applies to nearly every domain where humans make decisions based on evidence.

The Bomber Problem: Where Survivorship Bias Got Its Name

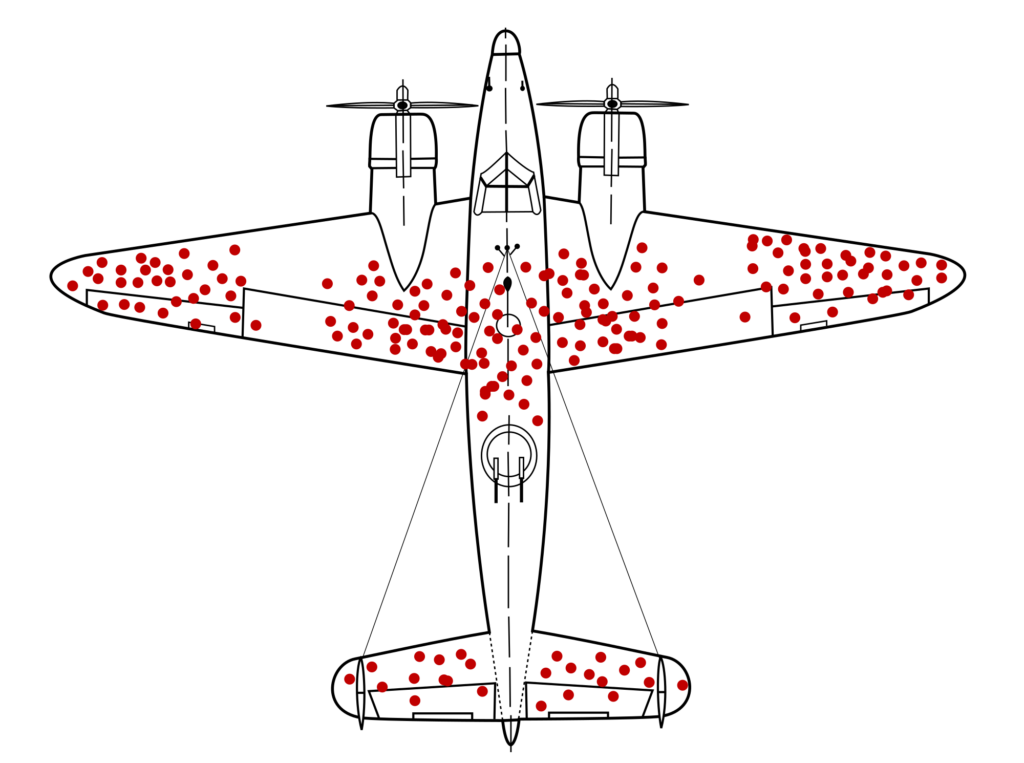

During World War II, the US military brought a statistician named Abraham Wald a problem. Allied bombers were returning from missions over Europe riddled with bullet holes. The military wanted to know where to add armour plating. The obvious answer was to reinforce the areas that showed the most damage: the fuselage, the wings, the tail gunner position.

Wald said the opposite. The planes they were examining were the ones that had survived. The bullet holes showed where a bomber could take damage and still fly home. The areas without damage (the engines, the cockpit, the fuel systems) were where the lost bombers had been hit. Those planes were not available for inspection because they had never come back.

The military had been looking at survivors and mistaking the pattern of survival for a map of vulnerability. Wald’s insight was that the missing data, the planes that never came back, contained the actual answer. As the story is usually told, the military added armour to the undamaged areas and bomber losses declined.

This is survivorship bias in its purest form: the data you can see leads you to exactly the wrong conclusion because the data you cannot see is what matters.
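Wald’s reasoning can be reproduced with a toy simulation. The sketch below is not his method and uses no historical data: the section names come from the story, but the `LETHALITY` probabilities, the number of planes and the hits per plane are all invented for illustration. Hits land uniformly across sections, yet the holes counted on returning planes cluster where hits are least dangerous.

```python
import random

# Hypothetical probability that a single hit in each section downs the plane.
# Illustrative assumptions only, not historical figures.
LETHALITY = {
    "fuselage": 0.05, "wings": 0.05, "tail": 0.05,
    "engines": 0.60, "cockpit": 0.50, "fuel system": 0.55,
}

def simulate(n_planes=10_000, hits_per_plane=3, seed=0):
    rng = random.Random(seed)
    sections = list(LETHALITY)
    seen = dict.fromkeys(sections, 0)   # hits counted on planes that returned
    total = dict.fromkeys(sections, 0)  # hits on every plane, returned or not
    for _ in range(n_planes):
        # Each hit lands on a section chosen uniformly at random.
        hits = [rng.choice(sections) for _ in range(hits_per_plane)]
        # The plane returns only if none of its hits proves fatal.
        survived = all(rng.random() > LETHALITY[s] for s in hits)
        for s in hits:
            total[s] += 1
            if survived:
                seen[s] += 1
    return seen, total

seen, total = simulate()
for s in LETHALITY:
    print(f"{s:12s} seen on survivors: {seen[s]:5d}   all hits: {total[s]:5d}")
```

The `total` column is roughly flat because every section is hit equally often. The `seen` column, the only one an inspector on the airfield could compile, is lowest for exactly the sections where a hit is most likely to be fatal.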

Survivorship Bias in Business and Success Advice

Open any bestselling business book and you will find a study of successful companies. The author identifies shared traits among the winners: bold leadership, a willingness to take risks, a distinctive culture, early adoption of some technology. The implied lesson is clear. Do what these companies did and you will succeed.

The methodology has a gap you could fly a damaged bomber through. For the pattern to mean anything, you would need to also study companies that had the same traits and failed. If 1,000 companies took bold risks and 10 succeeded while 990 went bankrupt, “bold risk-taking” is not a success factor. It is a gamble where the survivors get book deals and the dead get forgotten.
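The arithmetic is worth spelling out. The sketch below takes the 1,000 bold companies and 10 winners from the example above and adds a comparison group that is purely hypothetical (200 cautious companies, 2 winners), invented here so there is something to compare against. It contrasts what a winners-only study measures with what the full population shows.

```python
# Figures from the example: 1,000 bold risk-takers, 10 of which succeeded.
bold_total, bold_winners = 1_000, 10

# Hypothetical comparison group (invented for illustration):
# 200 cautious companies, 2 of which succeeded.
cautious_total, cautious_winners = 200, 2

# What a winners-only study sees: the share of winners that were bold.
share_of_winners_bold = bold_winners / (bold_winners + cautious_winners)

# What the full population shows: the success rate within each group.
bold_rate = bold_winners / bold_total
cautious_rate = cautious_winners / cautious_total

print(f"Winners that were bold:   {share_of_winners_bold:.0%}")
print(f"Success rate if bold:     {bold_rate:.1%}")
print(f"Success rate if cautious: {cautious_rate:.1%}")
print(f"Bold / cautious ratio:    {bold_rate / cautious_rate:.2f}")
```

Under these assumed numbers, 83% of the winners took bold risks, which looks like a strong pattern. Yet both groups succeed 1% of the time. The trait is common among winners only because it is common among companies in general.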

Jim Collins’ Good to Great studied 11 companies that made a sustained leap in stock performance and identified common characteristics. Within a decade of the book’s publication, several of the “great” companies had collapsed or been acquired. Circuit City went bankrupt. Fannie Mae required a government bailout during the 2008 financial crisis. The traits Collins identified were descriptive of a moment, not predictive of durability. The companies that failed did not get a chapter explaining what went wrong.

Survivorship bias makes success advice feel more reliable than it is. The entrepreneur who dropped out of university and built a billion-dollar company gets a profile in Forbes. The thousands who dropped out and ended up in debt do not get profiles. The pattern among the winners is real; the conclusion that it caused their success is not justified.

Survivorship Bias in Medicine and Research

Clinical trials can suffer from survivorship bias when patients who drop out of a study are excluded from the final analysis. If patients leave because the treatment is making them worse, removing them from the data makes the treatment look more effective than it actually is. This is called attrition bias, and it is a specific form of survivorship bias that medical researchers are trained to watch for.
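A small simulation shows how strong the effect can be. This is a sketch under loud assumptions, not a model of any real trial: the treatment is assumed to do nothing at all, and the dropout rates (70% for patients who get worse, 10% for those who improve) are invented. The function `simulate_trial` and its parameters are hypothetical names.

```python
import random
from statistics import mean

def simulate_trial(n=5_000, seed=1):
    rng = random.Random(seed)
    # Assumption: the treatment has no true effect. Each patient's change in
    # symptom score is noise centred on zero (positive = improvement).
    changes = [rng.gauss(0, 1) for _ in range(n)]
    # Assumption: patients who got worse drop out 70% of the time,
    # patients who improved drop out 10% of the time.
    completers = [c for c in changes
                  if rng.random() > (0.7 if c < 0 else 0.1)]
    return mean(changes), mean(completers), len(completers)

all_mean, completer_mean, n_completers = simulate_trial()
print(f"Mean change, everyone enrolled: {all_mean:+.2f}")
print(f"Mean change, completers only:   {completer_mean:+.2f}")
print(f"Patients remaining: {n_completers} of 5000")
```

The completers-only average comes out clearly positive even though the simulated treatment is inert. The simulation has one luxury a real trial lacks: it knows what happened to the dropouts. In practice that information is missing, which is why the choice of who stays in the analysis matters so much.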

Publication bias is another variant. Studies with positive results are far more likely to be published than studies with null or negative results. The published literature, the data set available to doctors making treatment decisions, systematically overrepresents what worked and underrepresents what did not. A 2017 analysis in the journal Biochemia Medica found that positive results accounted for an estimated 85% of published research, with negative or null findings systematically underrepresented.

The consequence is that expert disagreement in medicine is partly driven by a shared data set that is already distorted by survivorship bias before anyone begins analysing it. Two researchers looking at the same published literature are both looking through a filter that has already removed much of the evidence that might change their conclusions.
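The publication filter can be sketched the same way. In the toy model below, everything is an assumption made for illustration: the true effect is zero, a study counts as “positive” when its z-score exceeds 1.96, positive studies are always published, and other studies are published with probability `null_publish_prob`. None of these figures comes from the analysis cited above.

```python
import random
from statistics import mean

def simulate_literature(n_studies=20_000, null_publish_prob=0.1, seed=2):
    rng = random.Random(seed)
    # Assumption: the true effect is zero, so each study's z-score is noise.
    z_scores = [rng.gauss(0, 1) for _ in range(n_studies)]
    # Assumption: positive studies (z > 1.96) are always published;
    # all other studies are published with probability null_publish_prob.
    published = [z for z in z_scores
                 if z > 1.96 or rng.random() < null_publish_prob]
    positive_share = sum(z > 1.96 for z in published) / len(published)
    return mean(z_scores), mean(published), positive_share

all_mean, published_mean, positive_share = simulate_literature()
print(f"Mean z-score, all studies run:   {all_mean:+.2f}")
print(f"Mean z-score, published studies: {published_mean:+.2f}")
print(f"Share of published studies that are positive: {positive_share:.0%}")
```

Across all the studies that were run, the average effect sits near zero. Across the published subset it is clearly positive, and positive findings make up a far larger share of the literature than of the research actually conducted. Anyone reading only the published studies would estimate an effect that does not exist.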

Survivorship Bias in History

History is written by the survivors, and not only in the political sense. The physical artifacts that survive the passage of time are not a random sample of what existed. Ancient Roman buildings still standing today were, by definition, the ones built well enough to last two millennia. Concluding that “the Romans built things to last” from surviving examples ignores the unknown number of Roman structures that collapsed within decades and left no trace.

The same applies to music, literature, and art. People who say that music was better in the 1970s are comparing the best-remembered songs from that decade, curated by fifty years of cultural filtering, against the entire unfiltered output of the current moment. The forgettable songs from 1975 are forgotten. The forgettable songs from 2025 are still in your streaming queue.

This creates a persistent illusion that quality declines over time. Each generation compares its full experience of the present, including everything mediocre, against a past that has already been edited down to the highlights. The conclusion that standards are falling is often a measurement artifact, not a real trend.

How Survivorship Bias Interacts With Other Cognitive Errors

Survivorship bias rarely operates alone. It compounds with confirmation bias, the tendency to seek information that supports what you already believe. If you believe that hard work leads to success and you only study successful people, you will find hard work everywhere and your belief will be confirmed. The hard-working failures are invisible.

It also interacts with motivated reasoning, the tendency to reject conclusions that threaten your worldview. Acknowledging survivorship bias means accepting that much of what passes for evidence-based success advice is actually pattern-matching on an incomplete data set. That is an uncomfortable conclusion for anyone who has built a career on such advice, and discomfort is precisely what motivated reasoning works to suppress.

The combination creates a feedback loop: survivorship bias supplies the misleading evidence, confirmation bias ensures you find it persuasive, and motivated reasoning prevents you from questioning the whole setup. Breaking out of this loop requires deliberately seeking out the failures, the data that was filtered away: the companies that crashed, the treatments that did not work, the buildings that collapsed.

Recognising Survivorship Bias in Practice

The test is simple. When presented with a pattern derived from examining successful cases, ask: what happened to the ones that failed? If the answer is “we did not look at them,” the pattern is unreliable. If the answer is “there were no failures,” the claim is almost certainly wrong.

Survivorship bias is especially dangerous in domains where failure is invisible: businesses that shut down quietly, patients who leave a study, manuscripts that were never published, species that went extinct without being documented, ideas that were tried and abandoned without anyone writing about them.

The antidote is not cynicism. Some patterns really do explain success, and studying winners can generate useful hypotheses. The antidote is methodological discipline: test the pattern against the full population, including the failures, before concluding that it is causal. Wald did not ignore the surviving bombers. He used them to deduce what must have happened to the ones that were missing. That is the model.