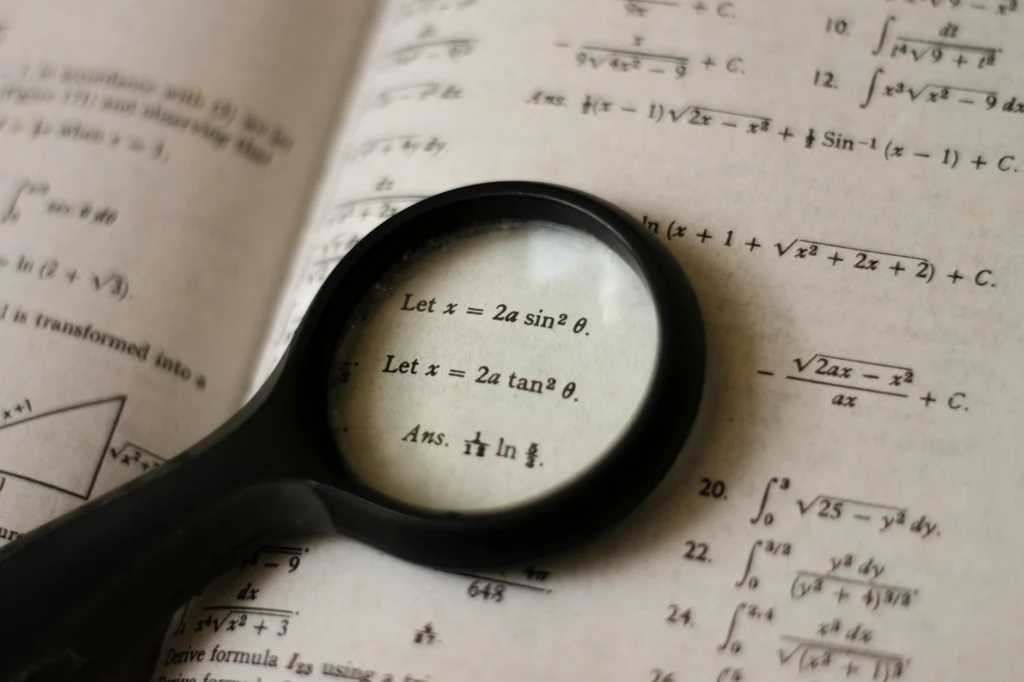

Every time you send a private message, log into your bank, or buy something online, your data passes through a gauntlet of mathematics designed to make eavesdropping practically impossible. The online encryption mathematics protecting these transactions relies on a simple but powerful idea: some mathematical operations are easy to perform in one direction but extraordinarily difficult to reverse. This asymmetry is the foundation of modern digital privacy, and understanding it requires no PhD, just a willingness to follow the logic.

The Lock-and-Key Problem: Why Online Encryption Mathematics Exists

Imagine you need to send a secret to someone you have never met, across a room full of people listening to every word. You cannot whisper. You cannot pass a note. Everything you say is heard by everyone. How do you share a secret under those conditions?

This is the fundamental problem of internet communication. Every message you send traverses public infrastructure: routers, cables, and servers operated by strangers. Before 1976, the only solution was for both parties to already share a secret code. Then two researchers, Whitfield Diffie and Martin Hellman, published a paper[s] that changed everything. They proved that two people could create a shared secret over a public channel without ever directly exchanging that secret. The math behind their method, and all the encryption built on it since, centers on so-called “trapdoor functions”: operations that are easy to do but hard to undo.

Multiplying Is Easy, Factoring Is Hard

The most famous trapdoor function in cryptography involves prime numbers. Multiplying two large prime numbers together is trivial for a computer. But given only the product, working backward to find the two original primes is staggeringly difficult. This is the foundation of RSA[s], the encryption system described by Ron Rivest, Adi Shamir, and Leonard Adleman in 1977.

Here is the concept stripped to its essentials. You pick two large prime numbers and multiply them to get a very large number. That large number becomes part of your public key, which anyone can see. But only someone who knows the two original primes can derive the private key needed to decrypt messages. The security rests entirely on the fact that no known algorithm can efficiently factor a sufficiently large number back into its prime components.

How large is “sufficiently large”? Modern RSA keys are typically 2,048 or 4,096 bits long. A 2,048-bit number has roughly 617 decimal digits. The best classical factoring algorithms would take longer than the age of the universe to crack one.

RSA is too slow for encrypting large amounts of data directly. In practice, it is used to securely exchange a smaller, faster key[s] that handles the actual bulk encryption. Think of RSA as the secure courier that delivers the real key, not the lock itself.

The Key Exchange: Sharing Secrets in Public

Before RSA, Diffie and Hellman solved the key exchange problem using a different mathematical trapdoor: the discrete logarithm problem. Their Diffie-Hellman key exchange[s] works like mixing paint colors.

Two people publicly agree on a starting color. Each secretly picks their own color and mixes it with the public one, then swaps the mixed results. Each person then adds their secret color to the mix they received. Both arrive at the same final color, but an observer who saw only the public color and the two mixed versions cannot work backward to find it. In mathematical terms, both parties compute a shared secret from publicly exchanged values, and the difficulty of reversing the math (computing discrete logarithms) prevents anyone listening from deriving that shared secret.

This technique, or modern variants of it, is used every time your browser establishes a secure connection to a website.

The Workhorse: AES Symmetric Encryption

Once two parties share a secret key, they switch to symmetric encryption for the actual data. The global standard is AES (Advanced Encryption Standard)[s], adopted by the U.S. government in 2001 after a five-year competition won by Belgian cryptographers Joan Daemen and Vincent Rijmen.

AES works by taking your data in 128-bit blocks (16 bytes at a time) and running each block through multiple rounds of scrambling: substituting bytes, shifting rows, mixing columns, and combining everything with pieces of the key. AES-128 uses 10 rounds, AES-192 uses 12, and AES-256 uses 14. Each round makes the relationship between the original data and the encrypted output more tangled and harder to reverse without the key.

AES is the first and only publicly accessible cipher approved by the NSA for top-secret information[s]. It is fast enough to run on everything from smartphones to server farms, which is why it handles the bulk of the world’s encrypted traffic.

Online Encryption Mathematics in Your Browser

When you visit a website with HTTPS (the padlock icon), your browser performs a TLS handshake[s]. This is where all the math comes together in a practical protocol:

- Your browser and the server agree on which encryption algorithms to use.

- The server proves its identity with a digital certificate.

- Both sides perform a Diffie-Hellman key exchange (or a variant using elliptic curves) to create a shared session key.

- All subsequent data is encrypted with AES using that session key.

The latest version, TLS 1.3, reduced the handshake from two round trips to one[s], cutting latency in half while eliminating older, weaker cipher options.

Forward Secrecy: Why Stealing Today’s Key Does Not Unlock Yesterday’s Messages

Modern messaging apps like Signal take encryption further with the [s]Double Ratchet algorithm[s]. Instead of using one key for an entire conversation, the Double Ratchet generates a new encryption key for every single message. If an attacker compromises one key, they can decrypt only that one message. Previous and future messages remain protected.

The algorithm achieves this by continuously mixing fresh Diffie-Hellman outputs into its key derivation chain. The result is forward secrecy (past messages stay safe if a key leaks) and what cryptographers call break-in recovery (future messages become secure again after a compromise). This protocol is used by billions of people[s] through Signal, WhatsApp, and other messaging platforms.

Elliptic Curves: Stronger Security With Smaller Keys

RSA keys need to be large to remain secure: 2,048 bits at minimum, with 4,096 increasingly recommended. Elliptic curve cryptography (ECC)[s] achieves equivalent security with dramatically smaller keys. A 256-bit elliptic curve key provides the same security as a 3,072-bit RSA key.

ECC is based on a different mathematical structure: the set of points on a curve defined by an equation like y2 = x3 + ax + b. Points on the curve can be “added” together following specific geometric rules. The trapdoor is that multiplying a point by a number (adding it to itself repeatedly) is fast, but given the result and the starting point, finding the number of times the operation was performed is computationally infeasible[s]. This is the elliptic curve discrete logarithm problem, and it is harder to solve than standard factoring, bit for bit.

Smaller keys mean faster computation and less bandwidth. This makes ECC the preferred choice for mobile devices, embedded systems, and the TLS connections securing your browsing right now.

The Quantum Threat and What Comes Next

Everything described above depends on the assumption that certain math problems are hard for computers to solve. Quantum computers threaten that assumption. In 1994, mathematician Peter Shor designed an algorithm that could factor large numbers in polynomial time[s] on a quantum computer, meaning RSA, Diffie-Hellman, and ECC would all be breakable.

No quantum computer capable of this exists yet. Current machines have roughly a thousand physical qubits, far short of the millions needed for error-corrected factoring of large numbers. But the threat is taken seriously because of “harvest now, decrypt later” attacks: an adversary could record encrypted traffic today and decrypt it once quantum hardware matures.

In August 2024, NIST released three finalized post-quantum encryption standards[s]. These algorithms, based on mathematical problems involving lattices (geometric structures in high-dimensional space), are designed to resist both classical and quantum attacks. NIST has also published a formal transition plan[s] urging organizations to begin migrating now.

The core idea has not changed: find a math problem that is easy one way and hard the other. The difference is that the new problems must be hard even for machines that exploit quantum physics. The mathematics evolves, but the principle endures.

Every private communication online, from TLS sessions to end-to-end encrypted messaging, is protected by a layered stack of mathematical primitives. The online encryption mathematics underlying these systems relies on computational hardness assumptions: problems in the complexity class believed to be outside P (and, for post-quantum schemes, outside BQP). Understanding this stack requires examining the specific trapdoor functions, their security parameters, and how they compose into real protocols.

Online Encryption Mathematics: Asymmetric Primitives and Their Hardness Assumptions

RSA: Integer Factorization

RSA[s], described by Rivest, Shamir, and Adleman in 1977 (and independently by Clifford Cocks at GCHQ in 1973), derives its security from the integer factorization problem. Key generation selects two large primes p and q, computes n = pq, and finds exponents e and d such that ed ≡ 1 (mod λ(n)), where λ is Carmichael’s totient function. The public key is (n, e); the private key is d. Encryption computes c ≡ me (mod n); decryption computes m ≡ cd (mod n).

The best known classical attack is the General Number Field Sieve (GNFS), which runs in sub-exponential time: Ln[1/3, (64/9)1/3]. For a 2,048-bit modulus, GNFS is computationally infeasible with current hardware. The largest RSA key publicly broken is 829 bits[s]. Current NIST guidelines recommend minimum 2,048-bit keys, with 3,072 bits for protection beyond 2030.

RSA’s throughput is too low for bulk encryption. In practice, RSA is used to transmit shared keys for symmetric-key cryptography[s], which handles the actual data encryption.

Diffie-Hellman: Discrete Logarithm Problem

The Diffie-Hellman (DH) key exchange[s], published in 1976, was the first practical public-key protocol. It operates in the multiplicative group of integers modulo a prime p with generator g. Alice computes A = ga mod p (secret a); Bob computes B = gb mod p (secret b). Both derive the shared secret s = gab mod p. The security rests on the Computational Diffie-Hellman (CDH) assumption: given g, p, ga mod p, and gb mod p, computing gab mod p is hard.

The CDH problem is no harder than the Discrete Logarithm Problem (DLP)[s]: if you can compute discrete logs, you can solve CDH by recovering a from ga. The index calculus family of algorithms (including Number Field Sieve variants) provides sub-exponential attacks on DLP in finite fields, requiring group sizes of at least 2,048 bits for adequate security margins.

Elliptic Curve Cryptography: ECDLP

Elliptic curve cryptography (ECC)[s], proposed in 1985, operates on the group of points on an elliptic curve E: y2 = x3 + ax + b over a finite field Fp. The group operation is point addition, defined geometrically by the chord-and-tangent rule. Scalar multiplication (computing nP = P + P + … + P, n times) is efficient via double-and-add. The reverse problem, the Elliptic Curve Discrete Logarithm Problem (ECDLP), finding n given P and nP, has no known sub-exponential classical algorithm.

The best general attack on ECDLP is Pollard’s rho, running in O(√n) time, which is fully exponential in the key size. This means a 256-bit ECC key provides equivalent security to a 3,072-bit RSA key[s]: a 12x reduction in key size for the same 128-bit security level. Standard curves include NIST P-256, Curve25519 (X25519 for key exchange, Ed25519 for signatures), and the Brainpool curves.

The asymptotic advantage of ECC over RSA grows with the security level. At 256-bit security, ECC requires 512-bit keys while RSA would require 15,360-bit keys. This makes ECC the dominant choice for TLS, SSH, and mobile applications.

Symmetric Encryption: AES and the Substitution-Permutation Network

AES (Rijndael)[s], standardized by NIST in 2001 as FIPS 197, is a substitution-permutation network operating on a 4×4 byte state matrix (128-bit blocks). It supports 128, 192, and 256-bit keys with 10, 12, and 14 rounds respectively. Each round applies four transformations:

- SubBytes: non-linear byte substitution via an S-box derived from the multiplicative inverse in GF(28) composed with an affine transformation. This is the cipher’s only non-linear component and its primary source of confusion.

- ShiftRows: cyclic left-shift of rows by 0, 1, 2, and 3 positions, providing inter-column diffusion.

- MixColumns: multiplication of each column by a fixed polynomial in GF(28)[x]/(x4 + 1), providing intra-column diffusion. Omitted in the final round.

- AddRoundKey: XOR with a round key derived from the key schedule via the Rijndael key expansion.

The best known attack on full AES-128 is the biclique attack at 2126.1 complexity, a marginal improvement over brute force (2128). AES remains the only publicly accessible cipher approved by the NSA for top-secret classification[s]. Its security margin is considered comfortable for the foreseeable future against classical adversaries. Against quantum adversaries, Grover’s algorithm reduces the effective security of AES-128 to 64 bits, which is why AES-256 is recommended for post-quantum resilience.

Protocol Composition: TLS 1.3

The TLS 1.3 handshake[s] (RFC 8446) composes the above primitives into a practical channel encryption protocol. The handshake completes in a single round trip:

- ClientHello: the client sends supported cipher suites, a random nonce, and (crucially) a key share for its preferred key exchange group (typically X25519 or P-256 ECDHE). By including the key share optimistically, TLS 1.3 eliminates one round trip compared to TLS 1.2.

- ServerHello + EncryptedExtensions: the server selects a cipher suite, responds with its own key share, and immediately begins encrypting. The server also sends its certificate and a CertificateVerify signature.

- Key derivation: both sides use the ECDHE shared secret as input to HKDF (HMAC-based Key Derivation Function) to derive handshake keys, then session keys for application data.

TLS 1.3 mandates forward secrecy by requiring ephemeral key exchange (no static RSA key transport). Every session generates fresh ECDHE keys, so compromising a server’s long-term private key does not retroactively expose past sessions. The only permitted AEAD ciphers are AES-128-GCM, AES-256-GCM, and ChaCha20-Poly1305.

End-to-End Encryption: The Double Ratchet

The Signal Protocol’s Double Ratchet[s] extends forward secrecy to the per-message level. It combines two ratcheting mechanisms:

- Symmetric-key ratchet: a KDF chain where each message key is derived from the previous chain key. Once a message key is used, the chain key advances and the old key is deleted. This provides forward secrecy within a single sending chain.

- Diffie-Hellman ratchet: with each message exchange, parties rotate their ephemeral DH key pairs. The DH output feeds into a root KDF chain that derives new sending and receiving chain keys. This provides break-in recovery: even if an attacker learns current chain keys, new DH ratchet steps introduce entropy the attacker lacks.

The combined effect: every message uses a unique key. Compromise of any single key reveals only that message. The protocol tracks message numbers and previous chain lengths to handle out-of-order delivery, storing skipped message keys (bounded by MAX_SKIP) for later decryption.

The Double Ratchet’s security properties, forward secrecy and post-compromise security, arise from the composition of the KDF chain’s one-wayness with the CDH assumption on the ratchet DH groups. Signal’s specification also includes a Sparse Post-Quantum Ratchet (SPQR)[s] for hybrid classical/post-quantum security.

The Quantum Threat and Post-Quantum Cryptography

Shor’s algorithm[s] (1994) factors integers and computes discrete logarithms in polynomial time on a quantum computer: O((log N)2(log log N)(log log log N)) gates for factoring N. This breaks RSA, finite-field DH, and ECDH. Grover’s algorithm provides a quadratic speedup for searching, halving the effective security of symmetric ciphers (AES-256 drops to 128-bit security, still adequate).

Current quantum processors (order of 1,000 physical qubits) are far from the millions of error-corrected qubits needed for cryptographically relevant factoring. But the “harvest now, decrypt later” threat model, where adversaries record ciphertext today for future quantum decryption, motivates immediate migration.

In August 2024, NIST finalized three post-quantum standards[s]:

- FIPS 203 (ML-KEM): Module-Lattice-Based Key-Encapsulation Mechanism (derived from CRYSTALS-Kyber). Primary standard for general key encapsulation. Security relies on the hardness of the Module Learning With Errors (MLWE) problem.

- FIPS 204 (ML-DSA): Module-Lattice-Based Digital Signature Algorithm (derived from CRYSTALS-Dilithium). Primary digital signature standard.

- FIPS 205 (SLH-DSA): Stateless Hash-Based Digital Signature Algorithm (derived from SPHINCS+). Backup signature standard based purely on hash function security.

NIST’s transition roadmap (NIST IR 8547)[s] calls for deprecating RSA, finite-field DH, and ECDH for key establishment by 2035. HQC (Hamming Quasi-Cyclic) was selected as an additional KEM candidate in March 2025, providing algorithmic diversity beyond lattice-based schemes.

The lattice problems underpinning ML-KEM and ML-DSA belong to a family of problems (LWE, MLWE, NTRU) that have resisted both classical and quantum attack for decades. Unlike factoring and DLP, no quantum algorithm provides exponential speedup against these problems. The mathematical hardness shifts from number theory to the geometry of high-dimensional lattices, but the architectural principle remains: one-way functions hard enough to bet civilization’s secrets on.